"From my close observation of writers... they fall into two groups: one, those who bleed copiously and visibly at any bad review, and two, those who bleed copiously and secretly at any bad review." -Isaac Asimov

You'd never know it unless you were one of about six people in the entire world, but today is a landmark anniversary for me. Three years ago, I was on summer break from teaching at my local college, when I got an email from the Royal Astronomical Society in England.

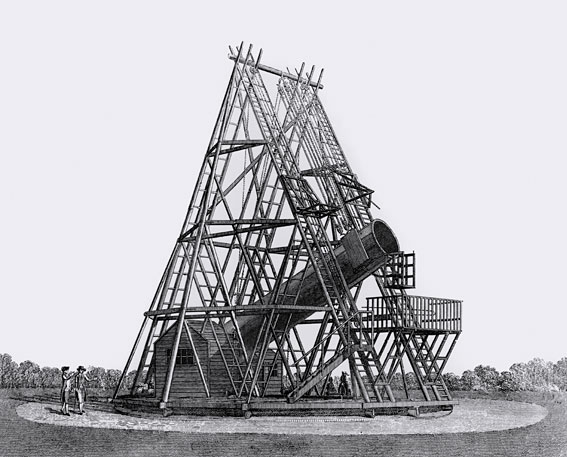

The UK-based society has been at the forefront of astronomy since 1820, and their records actually go all the way back to the founding of calculus! Their journal, Monthly Notices, was the location of my very first published scientific paper, but that wasn't why they were contacting me.

They had a controversial paper under peer review -- something that fell under my area of expertise -- and they asked me if I would step in as an expert referee.

It was a little bit of a surprise to me; I was young, I had only published a handful (or two) of papers, and I'd only refereed maybe three other papers before. I hardly considered myself to be an expert. And yet, impostor syndrome aside, I knew (and know) that I legitimately am an expert in a few particular sub-fields of astrophysics and cosmology.

For those of you just starting out in a scientific career, there's a very good chance that if you've ever published a paper in a peer reviewed journal, you'll be asked to referee a paper for that journal down the road. This is a vital part of the scientific process, and it's what allows experts to separate solid papers that deserve publication, like this one, from highly flawed papers that need significant work before they'd be suitable for it.

But no one ever tells you how to do it, not really.

Image credit: Nick of http://www.lab-initio.com/.

Before I get into what I consider your four jobs as a referee, let me tell you a few things I wish someone had told me (and, especially, had told many of my former referees) to keep in mind:

- What you're reading likely represents the culmination of months or even years of careful planning, execution, analysis, and hard work on the part of the researcher(s) you're reviewing. Respect that.

- Regardless of whether you like the viewpoint expressed in their work, their claims should be addressed in an objective, unbiased manner. This is not to say that you must be unbiased or without opinion; you will have your professional opinions, no doubt, and you should. But you must be aware of any biases you have, and do your best to address their work solely on its own merits.

- Finally, and most importantly, there are going to be flaws that you find in the text, but it is not your sole job to point them out, and it is certainly not your job to belittle the authors for their errors. (No one deserves that.) Be sure to point out what the positives are, what important valid points were made, and what the strengths of the paper are as well.

That's all the basic advice. But what about the meat-and-potatoes of peer review: the actual reviewing of the content of the paper? That's where you -- with all your expertise -- come in with your four jobs. Here they are.

Image credit: LeisureGuy's grandson, via https://leisureguy.wordpress.com/.

1.) To verify that the introductory section(s) adequately sets up, explains, and places their work into its appropriate historical and scientific context.

No science is ever done in a vacuum. Even your iconic image of Einstein as a "lone genius" is wildly inaccurate; without the centuries of scientific foundation that work is built upon and grown out of, none of the modern science undertaken today would be possible. There are many perspectives one can take, but the reality is that on most issues, there is a scientific consensus, there are dissenting opinions, there is wiggle-room, and there are important nuances that need to be addressed.

Most importantly, perhaps, there are points of contention surrounding any issue, and presumably this paper is attempting to take a step towards either strengthening or weakening one (or more) of these points. It's important to give the authors a lot of leeway here; this is not the place where new material is introduced. But it is the place to make sure that everyone who deserves credit is given credit for their prior work, and to ensure that the authors are very clear about what the rest of their paper is going to entail.

2.) What are the methods used to obtain and analyze the data presented in this paper? Are they sound? Are there questions about their validity?

The (rough) sections of a scientific paper are the abstract, intro, materials/methods/experiments/observations (depending on the field), results, and conclusions/analysis/discussion, followed by the references. If there are large flaws in the raw data that is gathered, then the results and conclusions are meaningless! If I'm trying to draw conclusions about a position down to angstrom accuracy, but I'm only using a device that can measure distances as finely as 10 nanometers, there's no way that should pass peer review!
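To make the angstrom example concrete, here is a minimal sketch of the sanity check a referee might do on a claimed precision. The function name and the numbers are illustrative assumptions taken from the example above, not from any real paper, and a real review would also need to consider averaging, calibration, and other sources of uncertainty.

```python
# Hypothetical sanity check on a claimed measurement precision.
# All names and numbers here are illustrative assumptions.

ANGSTROMS_PER_NM = 10  # 1 nanometer = 10 angstroms


def resolution_supports_claim(claimed_precision_angstrom: float,
                              instrument_resolution_angstrom: float) -> bool:
    """Return True if the instrument resolves at least as finely as the claim requires."""
    return instrument_resolution_angstrom <= claimed_precision_angstrom


claimed_precision = 1                           # conclusions quoted to 1 angstrom
instrument_resolution = 10 * ANGSTROMS_PER_NM   # a 10 nm device, expressed in angstroms

supported = resolution_supports_claim(claimed_precision, instrument_resolution)
shortfall = instrument_resolution / claimed_precision  # how many times too coarse

print(f"Claim supported by instrument: {supported}")
print(f"Instrument is {shortfall:.0f}x too coarse for the claim")
```

With these numbers the check reports that the claim is not supported: a 10 nanometer device is 100 times too coarse for angstrom-level conclusions.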

The job of the referee here is to make sure -- to the best of your ability -- that every source of error and uncertainty is quantified and understood as well as possible. (That's why this paper, for example, is absolutely worthless.) You should make sure that the appropriate scientific controls are in place. It's also your job to determine whether there's any suspicious (or possibly fraudulent) methodology at work.

Do your best; intentional deception is the toughest of these to catch, but you can do a great service to the scientific community if you keep a scientist (or a team of scientists) from fooling themselves.

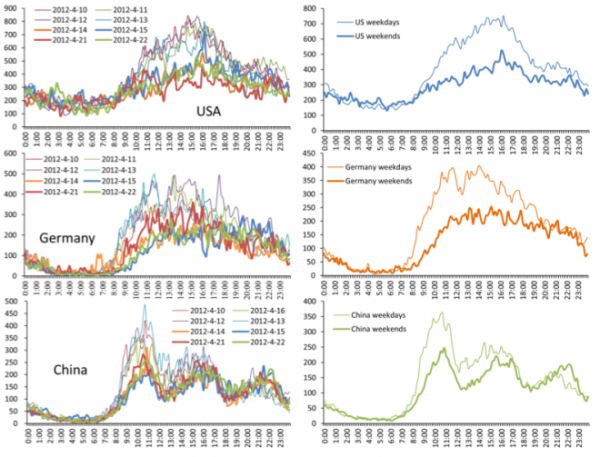

Image credit: Xianwen Wang et al., 2012, via http://arxiv.org/abs/1208.2686.

3.) Results: are they clear? Has anything been omitted (or included) that shouldn't have been? Is all the data that needs to be there actually there? And does it contain and show all the information that it was designed to?

This is often the easiest section to referee. If you know how something's supposed to work, and they took their data exactly as they said they would (or did their calculations, etc.), are these reasonable results? Are their calculations, curve-fits, etc., repeatable? Are they using well-known, well-understood, easy-enough-to-follow analysis methods?

Basically, based on their methods section, you should be confident that you'd be able to reproduce their results if you did this work, again, yourself. Unless there's a calculational error, a glaring omission, or some other gross mistake, there are rarely catastrophic problems in this section.

And most importantly...

4.) Can the conclusions reached be justified based on both the combined, pre-existing breadth of knowledge of the field and the work done in this paper?

There was a reason that everything in the introduction was necessary, and that the authors needed to be so careful about the work they did up until this point. It's all led up to this: the conclusions that can now be drawn in light of this paper's results, synthesized with everything else we know.

This, honestly, is where I -- in my own opinion -- think that peer reviewers need to be more diligent. Not because we need more uniform conclusions, but because we need fewer papers that draw grandiose conclusions based on a cherry-picking of historical and scientific facts. Authors should be free to express their professional opinions and assign their own weights to various findings, even if that supports a contrarian position. But authors should also be held accountable to at least mention the scientific findings that support the other side, particularly if the other side:

- is the mainstream/established consensus,

- contains well-known facts and arguments that the authors choose to overlook,

- or has recently published something challenging this point-of-view.

Science is a process and a discussion, and peer review is both a right and a responsibility. It's what's necessary to prevent "science" like the following.

For what it's worth, I wound up rejecting the paper I was called in to be the expert referee on, and I did so in particular because of the strong (and unreasonable) conclusions that it drew based on the lackluster evidence it cited. But I also pointed out which aspects of the paper were strongest, and what a version of the paper that would be acceptable (and scientifically interesting and valuable) would look like. When there is quality work that's been done, you should never belittle or berate an author simply on account of the portions of a paper that need changing.

But the peer review process is also our first line-of-defense against outrageous and unreasonable claims; it's what allows us to both discover and challenge scientific truths, as well as to present new possibilities.

Out of curiosity, was there an acceptable followup of the paper?

Excellent advice Ethan! I pay special attention to the "quotable" parts of the paper, and especially to claims made in the abstract and the beginning of the conclusions section. That is all many people will read! Make sure that _important_ caveats are not buried in the discussion section. I also de-snark my reviews right before I send them -- you want to make sure there isn't even the faintest hint of sarcasm.

Just FYI, the comic by "Nick" comes from this site:

http://www.lab-initio.com/index.html

Excellent column, excellent advice. I'd add one thing, which is an extension to your "respect that": especially if the paper comes from somebody who is junior, try hard to find positive things to say, and phrase comments in ways that lead to improvement.

Tor,

No; the paper was later submitted to a disreputable journal that does not do adequate peer review, where it was accepted. In the interest of keeping anonymity and confidentiality, I won't disclose any further information about that particular paper.

Nice quote from Isaac Asimov. But I can't find any evidence that he actually said it.

The first image is from Getty:

http://www.gettyimages.com/detail/photo/distance-learning-high-res-stoc…

Good points. When the process is working as it should, what we on the outside should see is the slow but inexorable building-up of small nuggets of facts bound together by cautious hypotheses, that gradually elucidate the natural world.

Sometimes it's maddeningly slow, but at this point in history we're privileged to be seeing progress made on a number of fundamental questions that really do have implications for our sense of where we stand in the universe.

For example (and re. your previous column on Earth-like planets), the first discovery of apparent life elsewhere will be a complete game-changer, allowing us to put a very rough (but nonetheless useful) number on something that for most of us is at most a fuzzy concept. Most of us reading this probably expect that to happen within our lifetimes.

Looking back over the past fifty years, we've seen major theories in cosmology whittled down to a mostly-convergent picture, even though there are still plenty of puzzles to solve. And we've seen another key piece of the Standard Model supported.

Question: Why not create another section or partition in papers (outside of "conclusions/discussion"), where authors are free to speculate wildly about the implications and value of their work? It seems to me that giving those kinds of speculations a designated place might also tend to enable stronger reinforcement of the norms keeping them out of the body of the work. Referees and reviewers could legitimately offer editing input to parse the material accordingly, reinforcing the partition.

I hope you realize that Sagan was giving an example of bad scientific thinking, not making the argument himself. The setup and the video title don't make that clear. Here's Sagan in context: http://www.youtube.com/watch?v=Cj5A0rKI0Ag

The cartoon is by Nick Kim, an environmental chemist, see http://en.wikipedia.org/wiki/Nick_D._Kim . Chemists seem to have somewhat more jaded views on peer review than physicists and cosmologists. See this article for an interesting read:

http://landshape.org/enm/peer-censorship-and-scientific-fraud/

It's pretty obvious that was what he meant since his summation is pretty glaring:

"Observation: Can't see the surface. Conclusion: There must be dinosaurs".

And given the text added afterward by Ethan about how the conclusion must be able to be drawn from the previous parts of the paper, pretty obvious that Ethan knows that too.

The only fly in that ointment is the caption on that youtube clip, but that would have been put there by whoever put that clip up. That person may have wanted to display how "the great science minds get it wrong all the time". Or they may have had a context for that that indicates they were not thinking that either.

"Question: Why not create another section or partition in papers (outside of “conclusions/discussion”), where authors are free to speculate wildly about the implications and value of their work"

That is, I believe, the purview of the "Letters" section. Which is why so many denialist idiots put their "peer reviewed papers" in the LETTERS section of, for example, Nature: they aren't peer reviewed but they DO appear in the "prestigious peer reviewed journal Nature" and, depending on the audience's expected knowledge can cast this as:

1) It's a peer reviewed paper in Nature! Take THAT, Warmistas!!!

2) It's a paper in a peer reviewed journal! Take THAT, Warmistas!!!

3) It was accepted as valid in the letters section of a peer reviewed journal! Take THAT, Warmistas!!!

Of course, they pick the characterisations in that order because they want to lie about the solidity of this "evidence" to big-up denial as being something "proper scientific".

Great blog! Like many, I am often pressed for time when reviewing papers so it's hard to think about how much the paper I am looking at probably means to the authors (years of work like you mention) and that it's not just another task in a very busy day. I think you make a great point about respect and we should always review papers like we wish others would review ours!

Looking on this in my role as an editor, you've missed out some important jobs. The job of a reviewer is to help the journal's editors decide whether to publish the work, and if it should be published, what changes should be made to improve the paper. Thus, you need to be able to provide some context to the work: is it within the scope of the journal? What does the work add to the scientific literature? How big an advance is it?

The first submissions of manuscripts usually aren't good enough for publication as they are, so editors need to know what improvements are needed, and whether and how the manuscript can be improved enough to be publishable in the journal.

Great post. It's good to be reminded that someone worked hard on their paper, even if it's not great.

Another important job of a reviewer is to discern whether the authors defined an appropriate research question. In some cases, this relates directly to the conclusions they drew from their work. But in others, an ill-defined research question or objective leads to a convoluted and difficult to understand paper.

Which is why so many denialist idiots put their “peer reviewed papers” in the LETTERS section of, for example, Nature

Nature is a special case here. They do have a section in which they publish reader correspondence, but they don't call that section "Letters". Instead, Nature uses that term the same way it is used in journals like Physical Review Letters: to denote a short, peer-reviewed article.* Most of the peer-reviewed articles published in Nature are in this section. It's possible that some of the people you refer to exploit the confusion in terminology to claim that their published correspondence (which, as you point out, is not peer reviewed) is a Letter (which is).

*I verified my memory on this point by looking up a Nature PDF in my library. The heading "Letters to Nature" appears at the top of each page of the PDF. At least that was their practice in 2001, the year this particular article was published.

I'm pretty sure that the review of the PRL isn't science-paper peer review. All it needs are some other fellows to say it has merit and it goes in if it gets selected out of the participants.

Interesting points about "Letters" and "letters." I'll keep an eye open for that sort of thing. There comes a point where the use of clever dodges like that should be treated as a form of overt fraud.

And sometimes those letters are selected based on having solely a "new idea" that is controversial and counters some known properties or laws.

This would be called trolling on the internet, but the original intent was to see if there was any change in the consensus and give fresh ideas (even though in almost all cases they're going nowhere).

I’m pretty sure that the review of the PRL isn’t science-paper peer review.

I've dealt with PRL. The Editors may reject some manuscripts without peer review as being unsuitable for that journal (I know this is routine at Nature and Science), but the manuscripts that pass that hurdle do get sent out for peer review. There is a certain amount of luck-of-the-draw in who you get for referees, but I have found that to be the case for other journals I have submitted to, and I have no reason to think the quality of the reviews is significantly worse than at those other journals. There is also a general habit in journals whose title contains the word Letters to make the review turn-around time short; i.e., papers that would require major revisions are rejected, which is not necessarily true of other journals. I don't have direct experience with Nature or Science, but my understanding is that review in those journals differs from PRL mainly in the degree of editorial triage (those journals are specifically looking for papers they expect to be heavily cited, so only a minority of manuscripts are sent out for review).

Science follows the practice of newspapers and general-circulation magazines in the US of calling the reader correspondence section "Letters". What Nature and PRL call "Letters", Science calls "Reports". This discrepancy may well add to the confusion.

Well, it's leaving me confused.

More coffee needed...

Ta :-)

hmmmmmmmm good

This post has troubled me.

I understand the need for peer review and moderation of papers before publication; but...

My concern is how we learn science and how we learn to be science minded.

Ethan's blog is a welcome science place. First it teaches the best astrophysics that Ethan knows to anyone curious. Second, it pretty much encourages any on topic discussion.

But off topic discussions don't belong here because they disrupt or confuse and are not the point of the blog. And I agree.

But the point that I am coming to is that to learn science and to learn to be science minded requires not only that you study and learn the best science as currently known; but that you also (in my opinion) develop your own learning hypotheses (yes and then test them with logic against theory and experiment).

For example as I am studying any book (on general relativity or politics) I am continually both digesting and questioning and building my own hypotheses.

Now my concern is: where does the sincere science minded person who is not a professional discuss his necessary learning hypotheses?

Now Ethan is proud that he did not approve the publication of an imperfect paper. Well, OK. And the author did have another place to publish. And to me this is very important; because whether experiment or theory is imperfect or not, is not as important as the fact that the author of whatever experiment or theory is willing to engage in scientific discussion. And presumably such an author is willing to listen, though not necessarily agree with feedback (e.g. Ethan's opinion that such a paper as is, is not publishable.)

Well, how do we encourage science mindedness when we discourage the efforts of sincere observers, experimenters and theorizers of science?

Charles Darwin, Gregor Mendel, Albert Einstein and other great scientists did great work outside of the mainstream science establishments of their time. Others, Subrahmanyan Chandrasekhar comes quickest to mind, had their careers nearly destroyed by great work that was mocked by the leading peer scientists of the day. Chandrasekhar's disagreement with Eddington is famous; it nearly destroyed Chandrasekhar's career as a scientist; he left the field of black holes for at least 20 years. The field of black holes was killed by Eddington (such was Eddington's authority) until he died 20 or so years later.

As Chandrasekhar said, "With (Bertrand) Russell presiding, (Sir Arthur) Eddington gave an hour's talk, criticizing my work extensively and making it into a joke... I don't think that there was any doubt in anybody's mind in those days that Eddington was right, by virtue of Eddington's extraordinary dominance... I did a lot of work on white dwarfs at that time, which, because of the controversy with Eddington, I did not publish... Eddington told me that so far as he could see, there was not much scope for my getting a position in England. And he thought that going to America would be useful to me." etc from Oral history of physics transcript. Of course, Chandrasekhar was correct and Eddington wrong.

So what of the not so great science minded folks like you or me? How do our sincere science minded efforts fare?

Well, out here on Ethan's blog we can certainly ask our question and may receive an answer or not. And "not" is OK.

Our ideas may be resisted with physical logical reasoning. Which is also OK.

So if you or I have ideas that we think are scientifically important, how or where can we publish them, even if we are not able to take them to that next level of professionalism? Where can our experiments, observations and hypotheses be discussed in terms of their scientific merit or possibility?

I'll continue this discussion, for those who are interested at

http://scienceblogs.com/startswithabang/2012/09/23/weekend-diversion-yo…

Ethan's place for off comment discussions.