“I knew I was alone in a way that no earthling has ever been before.” -Michael Collins

Less than a decade after the first human was launched into space, astronauts Neil Armstrong, Buzz Aldrin and Michael Collins journeyed from the Earth to the Moon. For the first time, human beings descended to the lunar surface, opened the hatch, and walked outside. Humanity had departed Earth and set foot on another world.

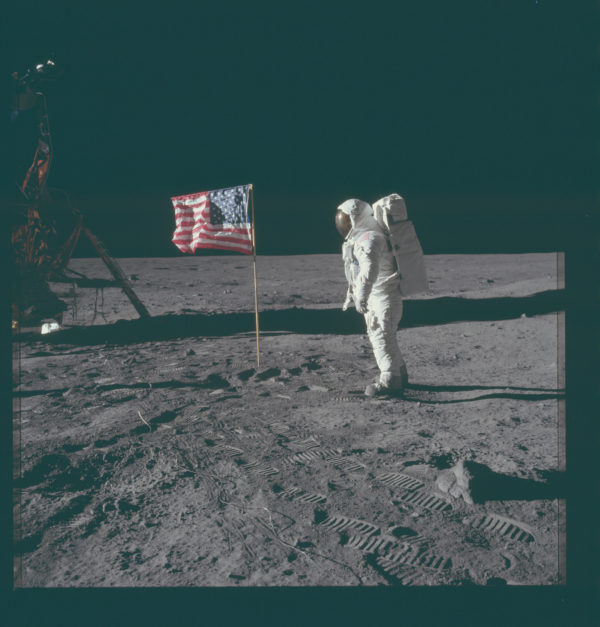

Buzz Aldrin having just planted the first American flag on the surface of a world other than our own. Image credit: NASA/Apollo 11.

While Armstrong and Aldrin walked on the surface, collecting now-iconic photos, deploying science instruments and returning hundreds of pounds of lunar samples, Michael Collins orbited overhead, embarking on a mission that no human being had undertaken before. Forty-seven years later, humanity has never had a bigger breakthrough as far as crewed space exploration goes.

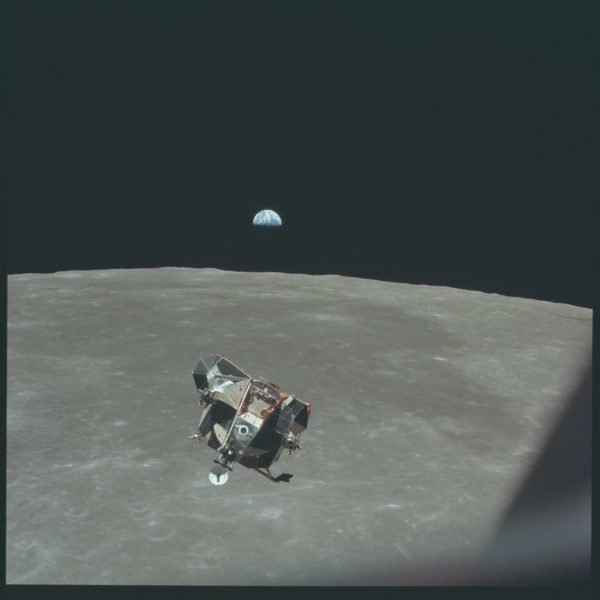

The returning Lunar Module, with astronauts Armstrong and Aldrin inside. Michael Collins is the only human not contained in this photo. Image credit: NASA/Apollo 11.

Go get the whole story -- and watch an amazing video reliving the journey -- today!

...and hopefully we never will.

Most simply don't understand how nasty space is to humans. Even with what shielding could be provided, the lunar astronauts still absorbed 2 rems of radiation in a few hours by crossing the Van Allen belts. They absorbed several more by being outside the Earth's magnetosphere.

Anything over 5 rems in a year is considered dangerous and the lunar astronauts exceeded that dose in under 100 hours. Space exploration means going farther. Technology isn't the limitation. We are. Humans are simply not built to survive that environment. Sending humans to explore Mars, Jovian and Saturnian satellites, or even worlds beyond our Solar System is a death sentence and leukemia is an ugly way to go.

I love me some space exploration, but send robots to do it.

Dealing with the environment is a big part of exploration. As we learn to protect ourselves against the elements, we progress. I feel our engineers are advanced enough to prevent most negative outcomes.

The further we reach into space, the more necessary it becomes to react instantly to any onboard crisis. Robotics can only achieve limited control from our programming of the operatives. High level decision making is not a part of robots, per se (maybe for androids of the future, though).

:)

@ # 1 & 2

I think Deinococcus radiodurans is something that has to be more researched to be able to develop living things that can do our bidding for space exploration.

However, we really need to figure out the travel part first. Wormholes, bending space, etc. Something has to give here, knowledge-wise, to allow us more interstellar travel options other than optic.

Rather than trying to 'educate' it, how about applying it as a radiation proof layer on the skin of the craft in which we can travel.

Or fix the errors with nanobots, increase our DNA so that where cold blooded creatures use DNA information to code for development paths in different weathers (we mammals have temperature control and therefore jettisoned much of our genetic information as no longer necessary), add DNA to form proteins and other signals to repair damage to our cells from radiation.

Tardigrades are extremely resistant to radiation damage.

@Wow #5

Nanobots also don't do well in space. Radiation wreaks havoc on semiconductors. Virtually all computers in space right up through the Space Shuttle had to use magnetic core memory, a technology that was abandoned everywhere but space in the mid-70s, just because it could store information without using semiconductors. Magnetic Core Memory is how the Voyagers have survived nearly 40 years in space. New probes today just use a LOT of redundancy in semiconductors to continually rewrite the damaged memory. There isn't enough room on a nanobot for either the necessary redundancy in semiconductors or magnetic core memory.
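To make the "redundancy plus continual rewriting" idea concrete, here is a minimal sketch of one common approach, triple redundancy with majority voting and periodic scrubbing. It is only an illustration: the function names `majority_vote` and `scrub` and the toy memory contents are assumptions, not a description of how any particular probe is built.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Return the bitwise majority of three copies of the same word."""
    return (a & b) | (a & c) | (b & c)


def scrub(banks: list[list[int]]) -> int:
    """Rewrite every copy with the voted value; return how many words were repaired.

    `banks` is assumed to hold exactly three equal-length copies of memory.
    """
    repaired = 0
    for i in range(len(banks[0])):
        voted = majority_vote(banks[0][i], banks[1][i], banks[2][i])
        for bank in banks:
            if bank[i] != voted:
                bank[i] = voted  # overwrite the damaged copy
                repaired += 1
    return repaired


# Toy example: two 8-bit words stored three times.
memory = [0b10110010, 0b01101100]
banks = [list(memory) for _ in range(3)]

banks[1][0] ^= 0b00001000  # simulate a radiation-induced single-bit flip
fixed = scrub(banks)       # one damaged word is found and rewritten
```

The cost is visible in the sketch: every word is stored three times, which is the kind of overhead the comment argues a nanobot has no room for.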

But a broken Nanobot

a) is easily replaced, just make another one

b) is much much more resistant to damage than even tardigrades

c) don't go ape when their function breaks: they stop working. Smacking your computer with a sledgehammer will damage it, but it won't then start a global thermonuclear war, because it never had that feature to begin with.

Modern satellites use error correction algorithms, much like flash memory does, to protect them against failure modes in space. Computers in space tend to be 20 years out of date because it takes a decade for the hardware to be tested enough.
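As a rough illustration of what an error correction algorithm does, here is a sketch of the textbook Hamming(7,4) code, which stores 4 data bits in 7 and can repair any single flipped bit. This is an assumed example for clarity; the codes actually flown on satellites or used in flash memory are generally more elaborate, and the function names are hypothetical.

```python
def hamming74_encode(data: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit codeword (bit positions 1..7)."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword: list[int]) -> list[int]:
    """Correct up to one flipped bit and return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_position = s1 + 2 * s2 + 4 * s3  # 0 means no error detected
    if error_position:
        c[error_position - 1] ^= 1  # flip the damaged bit back
    return [c[2], c[4], c[5], c[6]]


word = [1, 0, 1, 1]
stored = hamming74_encode(word)
stored[4] ^= 1                      # simulate a single-bit upset in storage
recovered = hamming74_decode(stored)  # the original 4 bits come back
```

Compared with keeping three full copies, this spends 3 extra bits per 4 data bits, but it can only repair one flipped bit per codeword.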

Moreover, miniaturization makes it much more likely that a "modern" chip would suffer errors. A cosmic ray may cause 10 electrons to move into the conduction band, but the 386 had millions of electrons to denote a "1", whilst modern lithography means the difference between a logic 1 and 0 would be of the order of 10 electrons or so.

Oh, nanobots don't respond to a C program (nor even machine code), they're far too small to be a "nano scale IBM PC Compatible".

Nor do they have to be.

RNA makes the right proteins not because it runs a program but because the electrical potentials set up cause one molecule to be attracted, and only one type of molecule will lock on, furthering the process to the next base. If it's not the right one, then the mechanical work done by the attachment will not progress the system to the next base and eventually the molecule attracted will be shunted off and clear the way for another molecule to be attracted.

No programming.

We don't need to use programs to make nanobots do their work either. Just construct them to do the work designed.

@PJ #2

While true in the past, we’re already beyond that. In today’s complex problems, machines are not programmed. Depending on the method of machine learning (Deep Learning, SVM, Random Forest, etc) the machine only has to be given a method of scoring or a practice set to learn from.

An interesting example of unsupervised learning (just given a scoring method) is this one of a robot figuring out what it itself is and how to use its form to walk across the floor.

https://youtu.be/iNL5-0_T1D0

In the category of supervised learning and speaking specifically to your point of machines not making high level decisions, there is the example of AlphaGo and Move 37 of Game 2 in the match against Lee Sedol.

http://www.wired.com/2016/03/two-moves-alphago-lee-sedol-redefined-futu…

Today machines can make decisions in situations they have never seen before and they can adapt to carry out whatever mission they’ve been tasked with. It requires some fairly substantial computing power right now but in 20-30 years your smartphone will be smarter and more creative than any human who ever lived. For the weight of an astronaut and all the life support machinery you could send 50 smartphone-guided rovers, all hardened against the nasty environment of space.

There is no point to subjecting humans to the deadly environment of space especially when sending a human means you get even less scientific data back than if you had sent lighter and more robust autonomous probes.

@Wow #8

doh! blockquote fail

"Satellites and Space Exploration Probes are different animals."

But all animals, even if hugely different from each other, use prions to "error correct" protein building.

"Even the high flying geosynchronous satellites are only ~36,000 kilometers up whereas even the compressed leading edge of the magnetosphere is 65,000 kilometers up."

Irrelevant. The height doesn't change whether error correction algorithms can be used. Nor does it change how we build nanobots.