“There are two types of people who will tell you that you cannot make a difference in this world: those who are afraid to try and those who are afraid you will succeed.” -Ray Goforth

We've really investigated some amazing scientific stories this week here at Starts With A Bang! There's always so much to consider, think about and enjoy, and I'm already looking ahead to what's on the plate for this week: a new podcast to put together, progress on designing and constructing our timeline-of-the-Universe poster, and putting the final touches on my upcoming book, Treknology! In fact, I had an incredible chance to talk about it with Richard Jacobs on the Future Tech Podcast, which -- if you have 36 minutes -- you should take a listen to, as there's so much we explore!

We've had a pretty incredible week of stories, of course, and if you missed anything (or want a second look), here's the recap:

- How many black holes are there in the Universe? (for Ask Ethan),

- The scientific story of how each element was made (for Mostly Mute Monday),

- How much CO2 does a single volcano emit?,

- We're way below average! Astronomers say Milky Way resides in a great cosmic void,

- New LIGO signal raises a huge question: do merging black holes emit light?, and

- Can moons have their own moons?

So it's been a typical week: I've written six new articles, and you've responded with nearly 100 comments for me! What else does that inspire? Let's find out in our comments of the week!

Image credit: Keck / UCLA galactic center group / A. Ghez et al., via http://astro.uchicago.edu/cosmus/projects/UCLA_GCG/.

From Paul Dekous on the orbits of stars around our galaxy's central black hole: "...why aren’t those orbits more coplaner like the planets in our solar system, should’t those stars align much more if there was indeed a massive object tying everything together?"

This is a very interesting question, but let me ask you a question to get you thinking in the right direction: why are the planets in our Solar System all in a plane, but the Oort cloud objects have a diffuse, spherical distribution? Or why is our galaxy mostly a disk, except with a bulge in the center?

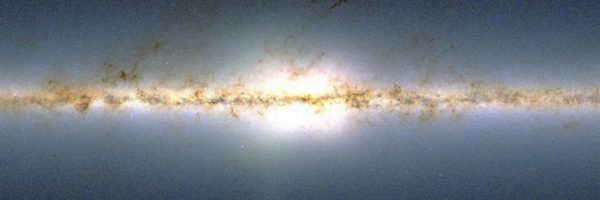

The SDSS view in the infrared - with APOGEE - of the Milky Way galaxy as viewed towards the center. Image credit: SDSS / APOGEE.

It's because the "disk" part comes about when you form a structure: something that gets pancaked or funneled into a particular shape based on what's energetically favorable. But over time and through chaotic interactions, or because you were never subjected to the forces that made a disk in the first place, you obtain random properties that allow you to be distributed more like a "swarm." The interactions that give rise to stellar orbits around the galactic center are much more a part of the latter category, and that's why they -- like elliptical galaxies, our bulge, or the objects in the Oort cloud -- have somewhat random distributions in terms of their orbital axes.

The simulated decay of a black hole not only results in the emission of radiation, but the decay of the central orbiting mass that keeps most objects stable. Image credit: the EU’s Communicate Science.

From Denier on a view of quantum gravity and where he's in error: "If Classical Physics held true all the way down you’d be right on the money but Quantum Gravity is the probabilistic movement of particles and has nothing to do with the warpage of space-time. With Quantum Gravity there is a direct outward path through space from the center to the horizon."

I think this is where the error arises, but you must recognize that I'm extrapolating from quantum field theory for the other forces because we don't have a quantum theory of gravity. The way quantum electrodynamics works, for example, is that you consider all possible paths a particle could take, integrate over them weighted by their probabilities (no matter how infinitesimally small), and you arrive at the total probability and amplitude of a particle's distribution. But what you're describing as a "direct outward path" should be a path with exactly zero probability, meaning it won't contribute anything at all.

There is a physical limit in space and time to "wavefunction spreading," which is defined by quantum operators that don't simply allow you to get "any result at all" like you might intuit. Even virtual particles can't do just anything you will them to. They are still bound by the laws of physics, even if their existence is calculational/mathematical in nature.

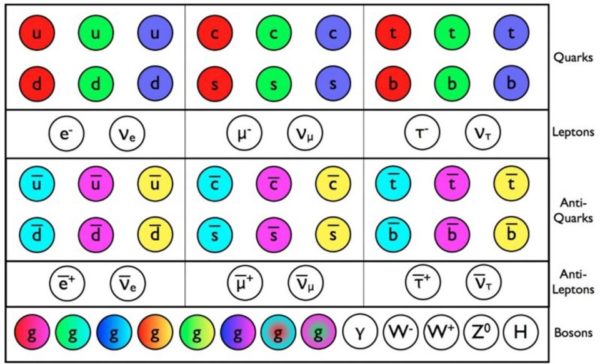

The known particles and antiparticles of the Standard Model all have been discovered. All told, they make explicit predictions. Any violation of those predictions would be a sign of new physics, which we're desperately seeking. Image credit: E. Siegel.

From Elle H.C. on a new idea of particles: "A wave is like a Spring, a compressed spring is harder, but it is still the same spring, moving along or against the grain. So what you’re measuring could be different compression states of the same spring, and not different particles."

This is actually a super old idea, and the idea that higher-mass versions are simply "excited states" or resonances of lower-mass ones has been ruled out through experimental particle physics. It isn't to say it's a bad idea; it's not, in principle. But it isn't how our Universe works, and if you don't believe it, try explaining how a muon decays (and doesn't decay) with it; you can't.

Although we've seen black holes directly merging three separate times in the Universe, we know many more exist. Here's where they must be. Image credit: LIGO/Caltech/MIT/Sonoma State (Aurore Simonnet).

From Omega Centauri on stellar-mass black holes vs. (super)massive black holes: "So the total mass of stellar BHs may be comparable to the total mass of MBHs. This either implies that perhaps half of stellar mass BHs have been absorbed by MBHs, -or that MBHs have another major growth mechanism beyond eating stellar mass BHs?"

I am just assuming that when you say "MBH," you mean "SMBH," for supermassive black hole, and not "micro-black holes," which I will not address here, now, or in the foreseeable future due to the number of times I've addressed them and their lack of existence.

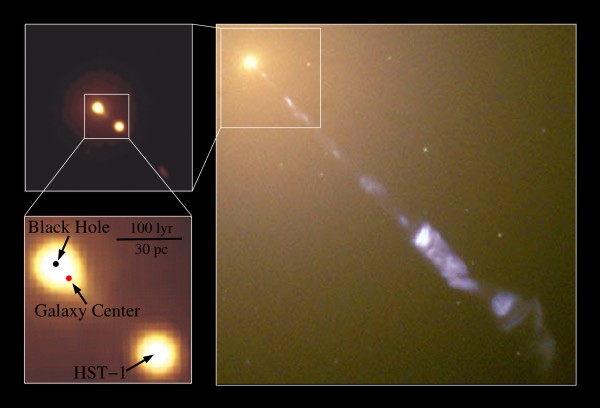

Image credit: NASA and the Hubble Heritage Team (STScI/AURA), J. A. Biretta, W. B. Sparks, F. D. Macchetto, E. S. Perlman.

What we expect is that, on average, somewhere around 1 in 1,000 stars that form will create black holes, but this is heavily mass-weighted, so that perhaps a few percent of all the stars that form, by mass, wind up creating black holes. If we take a look at supermassive black holes, the largest ones (like the 6 billion solar mass one in M87) come in the galaxies with the largest stellar mass populations (M87 has roughly 10^13 solar masses in stars), while our galaxy (with more like 10^11 solar masses in stars) has only a 4 million solar mass black hole. If we just take those two numbers, we find that there's roughly a factor of 100-1000 difference between the total mass of stellar black holes and the mass of a supermassive black hole in galaxies. It isn't close to half; it's more like 1:100 to 1:1000, supermassive to stellar.
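
That estimate can be checked with a few lines of arithmetic. The sketch below uses the galaxy masses quoted above; the 3% figure is an assumption standing in for "a few percent," and treating all of that stellar mass as black hole mass is a deliberate simplification. The function name is purely illustrative.

```python
# Rough comparison of stellar-black-hole mass to supermassive-black-hole mass.
# Galaxy numbers are the ones quoted in the text. BH_MASS_FRACTION is an
# assumed stand-in for "a few percent" and is not a measured value; counting
# the full mass of black-hole-forming stars overestimates the remnant mass.

BH_MASS_FRACTION = 0.03  # assumption: ~3% of stellar mass is in black-hole-forming stars

GALAXIES = {
    # name: (stellar mass, central black hole mass), both in solar masses
    "M87": (1e13, 6e9),
    "Milky Way": (1e11, 4e6),
}

def stellar_to_smbh_ratio(stellar_mass, smbh_mass, fraction=BH_MASS_FRACTION):
    """Return (mass in stellar black holes) / (mass of the central black hole)."""
    return fraction * stellar_mass / smbh_mass

for name, (stars, smbh) in GALAXIES.items():
    ratio = stellar_to_smbh_ratio(stars, smbh)
    print(f"{name}: stellar black holes outweigh the central one by ~{ratio:.0f}x")
```

With these inputs the ratio lands at about 50 for M87 and about 750 for the Milky Way, which is the same ballpark as the range quoted above; a different assumed fraction shifts both numbers proportionally.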

Don't rush to implications just yet!

The most current, up-to-date image showing the primary origin of each of the elements that occur naturally in the periodic table. Neutron star mergers and supernovae may allow us to climb even higher than this table shows. Image credit: Jennifer Johnson; ESA/NASA/AASNova.

From Michael Kelsey on making the light elements: "The main figure (annotated periodic table) shows exactly this: Lithium is mainly yellow (“dying low mass starts”), with most of the rest the pink corner (“cosmic ray fission”, or what I would have called spallation), and a thin strip of dark blue (“big bang fusion”) for the primordial Li-7.

For beryllium and boron, both have only single stable isotopes, which makes their production tricky. B-10 is the daughter of Be-10 decay, but Be-10 itself is hard to produce — neutron absorption onto stable Be-9 produces alphas (Be-9(n,2n)Be-8*, Be-8* -> 2a), so you won’t get Be-10 in stars."

There are a few ways to make the rare elements lithium, beryllium and boron, but most important isotopes are unstable, and the elements you do make are easily destroyed. The Universe is full of hydrogen (normally H-1) and helium (normally He-4), where isotopes like deuterium and He-3 are stable, while tritium (H-3) is long-lived. But there are no stable mass-5 or mass-8 nuclei, so you can only make lithium-6 and lithium-7 (or beryllium-7, which decays to lithium-7 on long timescales) out of them. But lithium-6 and lithium-7 are easy to blow apart in stars, so not only do stars do a poor job of making them, but any pre-existing Li-6 or Li-7 that makes its way into a star isn't going to last long.

So you can get a little help in the final stages of red giant stars, which is what "dying low mass stars" that produce planetary nebulae are for lithium, but beryllium and boron can't do even that. As Michael Kelsey alluded to, you might think, "oh, I know Be-9 is stable and I can start with a bit of that, and then hit it with a neutron, making Be-10 which decays to stable B-10." It's a good idea, since you get free neutrons in stars all the time, and Be-9 isn't super-easily destroyed like lithium is. But there's a problem when you add a neutron to Be-9; you don't get the ground state of Be-10, but an excited one: Be-10*, which immediately emits two neutrons in response. The daughter product, Be-8, then only lives for ~10^-16 seconds before decaying into two He-4 nuclei, and that's the end of boron and beryllium. So far, cosmic rays are the only way we know how to get there.

Understanding the cosmic origin of all the elements heavier than hydrogen can give us a powerful window into the Universe’s past, as well as insight into our own origins. Image credit: Wikimedia Commons user Cepheus.

From eric on the highest elements of all: "It would be great if Walt Loveland (or a collaborator) could update The Elements Beyond Uranium. I was going to recommend it to folks who wanted to learn more about the discovery of the man-made elements…then I realized that it only covers about 15 of them, and there are now 24. So it misses a full third!"

This is one of my favorite things about science: the ongoing process. When I was a kid, the periodic table went up to 106, provisionally, and people argued about whether there was an "island of stability" somewhere higher up there, while bismuth, element 83, was the heaviest stable element. Now? We're up to 118, inclusively, which is a big deal because 119 starts the next row of the periodic table! We're almost up to the "eighth period," and element 126 is where that supposed "more stable" isotope would be. If we're lucky, and if we're ingenious enough, we'll be able to test that out. Oh, and bismuth? It decays after ~10^19 years, a billion times longer than the age of the Universe. Wait long enough, and it will all become thallium.

When volcanoes erupt, a large amount of material from the Earth's interior, including extraordinary amounts of carbon dioxide, are released into the atmosphere. Image credit: European Geosciences Union.

From CFT on a little bit of climate misdirection: "Oh Nooooooooooes!! Quck, everyone, stop breathing while there’s still time!

Too bad Ethan didn’t mention the number one greenhouse gas. Water vapor. Damn. Too bad our planet is 71% covered with water and the damn sun keeps evaporating it."

"The pressure allows water to exist in the liquid phase, and the heat-trapping clouds and gases like water vapor, methane, and carbon dioxide give us the warmth necessary to have oceans. Carbon in particular is a tremendous part of our planet; it's the fourth most abundant element in the Universe, the essential element for organic matter, and – other than the Sun – is the most important factor in determining Earth's temperature. It's also the essential element in two of the three major greenhouse gases playing a role in our temperature, with water vapor varying tremendously based on other factors. But most of that carbon is sequestered not in the Earth's crust, but deep within the mantle."

But that's not even the point. The point is that this article was about how much CO2 volcanoes emit and how that compares to the CO2 that humans emit. And that was handled extremely accurately. You clearly don't like the conclusion, but you're going to have to get used to it. The world doesn't care about your counterfactual conclusions; it simply does what physical science demands of it.

Hundreds of active and dormant volcanoes worldwide, like the ones shown here in Kamchatka, continually degas and emit CO2. Image credit: Cosmonaut Fyodor Yurchikhin / Russian Space Agency Press Services.

From SteveP on how he sees the climate wars of today: "The tendency of simians to band together in tribes, teams, and clans with common misperceptions is a fascinating part of the human experience. Among the cultural values of the Fossil Fuel tribe ( the FoFu), for instance, is the belief that anyone without the same stripes as them is a foe, so an outsider trying to help the FoFu understand their existential predicament is a little like a naturalist trying to warn a badger away from a leg hold trap.

Despite their acquisition of language and reasoning skills, the FoFu are a puzzle. They have yet to realize that a near ubiquitous, persistent, steadily accumulating , acidic, non-condensing infrared active waste product that they are actively creating is poised to wreck their comfortable niche in the universe. Whether or not they can be alerted to the problem in time to avert disaster is a question. Is anyone out their fluent in FoFu?"

I think it's a lot more than that, SteveP. There was a very prescient book called Culture Wars (pick it up!) that was written in 1991, and I think we're seeing the endgame (or maybe not; maybe just the middlegame) playing out today. It's a question of identity that I think is at the core of this conflict. How do we define ourselves? Who do we think is "like us" or different from us, who is on "our side" versus who isn't, who is "fighting the good fight" and who's a villain in the story of our world today?

When we think in these terms, we lose the ability to look at the pros and cons of an argument. We lose the nuance. We lose any pretense of objectivity that we had. And it's something we all have to fight against by asking questions and being open to new information. I spent most of yesterday at a Sheriff's workshop on civilian responses to public threats, ranging from lone gunmen (it's 98% men who are shooters) to knife-wielding maniacs to bombers and more. You might not think there'd be a bunch of lefties in the workshop, but there we were. Regardless of where you stand on a variety of political issues, this world is all of ours, and it's up to each of us to contribute to making it better.

The construction of the cosmic distance ladder involves going from our Solar System to the stars to nearby galaxies to distant ones. Each “step” carries along its own uncertainties; it also would be biased towards higher or lower values if we lived in an underdense or overdense region. Image credit: NASA, ESA, A. Feild (STScI), and A. Riess (STScI/JHU).

From Anonymous Coward on the cosmic distance ladder: "Is it possible to measure the Hubble constant using supernovae or a Cepheid variables from galaxies outside the KBC void?"

Supernovae? Yes. Cepheids? Nope. In fact, supernovae are really the only standard candle that serves as a distance indicator that can take us beyond the KBC void. The whole point is that we may be biasing ourselves towards higher values of H by being situated where we are. If this idea is correct, we should be able to determine this with future surveys that get large numbers of distant supernovae like Euclid, WFIRST and the LSST. I am hopeful.

Galaxy Messier 77, which may be quite similar to our own. Image credit: NASA, ESA & A. van der Hoeven.

From chris heinz on our likelihood and location: "Our solar system is in a relative void too: the outskirts of a spiral galaxy. between arms. Antropic principle maybe selects for voids to support life because of lower radiation levels?"

We're not that far towards the outskirts; we're about halfway between the edge of the arms and the center. We're on a spur of one of the arms, so not exactly between arms, and that situation is temporary, as stars move in-and-out of the arms. But why would you think we need a void to support life, or that elevated radiation levels would be dangerous to life on a planetary scale? Our Sun's protective effects and the Earth's magnetosphere do a wonderful job of protecting us from cosmic rays, and there's no reason to believe they wouldn't also be sufficient anywhere else in the galaxy. Don't assume we need to resort to anthropic arguments when there may not even be a problem or puzzle here.

The simulated large-scale structure of the Universe shows intricate patterns of clustering that never repeat. But from our perspective, we can only see a finite volume of the Universe. What lies beyond this edge? Image credit: V. Springel et al., MPA Garching, and the Millennium Simulation.

From JonW on what makes an average density to the Universe: "Is “average” density defined via random sampling of galaxies (observers) or random sampling of points in the universe?"

Neither. It's a random sampling of volumes in the Universe, which allows us to determine the overall average as well as the variation from that average on any scale we look at. Small scales have larger magnitude variations; large scales have small magnitude variations.
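
A toy illustration of that last point, under a loud assumption: the points below are scattered uniformly at random in a unit cube, with none of the clustering the real Universe has, so this only shows the counting-statistics part of the effect. The function and variable names are made up for the sketch.

```python
import random
import statistics

def fractional_scatter(points, cells_per_side):
    """Split the unit cube into equal cubic cells; return std/mean of the per-cell counts."""
    counts = [0] * cells_per_side ** 3
    for x, y, z in points:
        # Map each coordinate in [0, 1) to a cell index along that axis.
        i = min(int(x * cells_per_side), cells_per_side - 1)
        j = min(int(y * cells_per_side), cells_per_side - 1)
        k = min(int(z * cells_per_side), cells_per_side - 1)
        counts[(i * cells_per_side + j) * cells_per_side + k] += 1
    return statistics.pstdev(counts) / statistics.mean(counts)

rng = random.Random(42)  # fixed seed so the run is repeatable
# Assumption: an unclustered "toy universe" of 20,000 uniformly random points.
points = [(rng.random(), rng.random(), rng.random()) for _ in range(20000)]

for cells_per_side in (2, 4, 8):
    scatter = fractional_scatter(points, cells_per_side)
    print(f"{cells_per_side ** 3:4d} sample volumes: fractional scatter = {scatter:.3f}")
```

The smaller the sample volumes (more cells per side), the larger the fractional scatter from one volume to the next, which is the trend described above.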

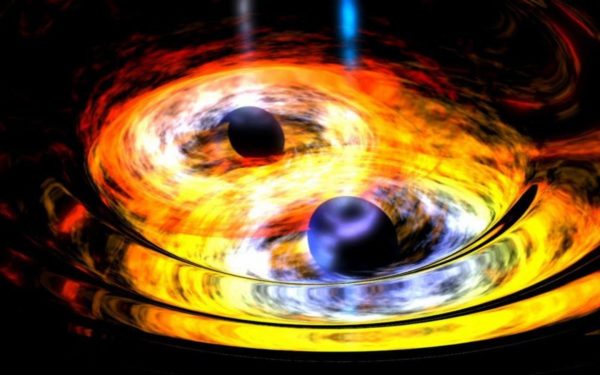

Artist's impression of two merging black holes, with accretion disks. The density and energy of the matter here should be insufficient to create gamma ray or X-ray bursts, but you never know what nature holds. Image credit: NASA / Dana Berry (Skyworks Digital).

From Sinisa Lazarek on why light could be delayed: "if both EM waves and grav. waves propagate at the same speed, why a delay of 19 hours?"

19 hours ought to be too much; we don't think that's likely. But! Sometimes you make signals that take some time to travel through a medium or through matter. Light from a supernova needs to travel through the outer material of the progenitor star, and so there's a delay there. Light from other sources may need to travel through other media or matter, and so may have its arrival time delayed in a way that gravitational waves do not. But 19 hours is probably too much.

A blazar coming from the same region of the sky where ultramassive black hole OJ 287 resides. Images credit: Ramon Naves of Observatorio Montcabrer, via http://cometas.sytes.net/blazar/blazar.html (main); Tuorla Observatory / University of Turku, via http://www.astro.utu.fi/news/080419.shtml (inset).

From Denier on visualizing a blaze of fantastic radiation: "What would it look like to the instruments if a black hole jet was pointed at us for a fraction of a second?"

We don't need to imagine; these objects are known as Blazars and exist in some significant abundance. Most AGNs (which arise from black hole jets) aren't pointed at us, but a few are!

Of course, a Kuiper belt object would need to have a moon with its own moon to be considered a moon having a moon. The distances at play would likely need to be very great; at some point, the gravitational binding energy becomes very small and the region you have for success is extremely narrow. Image credit: Robert Hurt (IPAC).

From Anonymous Coward on whether moons could have moons: "To have a stable moon with a moon system requires finding a stable solution to the three-body problem, which is the classical example of a chaotic dynamical system."

Well, let's not go that far. When we say "stable," we don't mean 100% stable on all timescales permanently forever and ever, which we never have. In roughly 10^23 years, the Earth would spiral into the Sun due to gravitational radiation, even if Earth and Sun were the only two masses in the Universe. But we consider our Moon orbiting Earth orbiting the Sun to be stable, because it's stable on the timescales of the Solar System. If a moon of a moon only lasts a few million or even a few hundred million years, it isn't a good deal for the Solar System.

Mars' Phobos might be interesting, but there was probably a larger, third, inner moon that was created when Phobos and Deimos were, and it has already fallen back onto Mars. Phobos is likely next, but Deimos might be stable, now, after all.

Jupiter with its four largest moons. Image credit: Mike Hankey, via http://www.mikesastrophotos.com/planets/amateur-astronomer-strikes-agai….

And finally, from Tom P. on what a moon of a moon would need to survive: "In summary, it sounds like you are saying there’s no reason a moon couldn’t have a moon but it probably couldn’t hold on to it for very long. Is it possible to make an order of magnitude estimate of how long the more likely satellites could keep a moon?"

If you're talking about something a few thousand or tens-of-thousands of kilometers away, a moon could theoretically last billions of years around Callisto, the outermost Galilean moon of Jupiter. If you can steer clear of having your orbit perturbed too severely by Ganymede, you ought to be good to go for a very long time, but that may be the limiting factor. We also don't know the extent of Callisto's atmosphere, as it's never gotten a good mission to go by it. Billions of years are plausible for Triton as well, but our own Moon, honestly, may be a better candidate than Triton for gravitational regions of stability.
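
One rough yardstick for a moon's "gravitational region of stability" is its Hill radius, the distance out to which its own gravity dominates over the planet's tides. Using it here is an assumption of this sketch, not something the estimate above is stated to rest on, and the masses and orbital distances are rounded reference values supplied for illustration.

```python
# Approximate Hill radius r_H = a * (m / (3 M))**(1/3) for a moon of mass m
# orbiting a planet of mass M at distance a (circular orbit assumed).
# The numbers below are rounded reference values, not taken from the text.

MOONS = {
    # name: (orbital distance from planet in km, moon mass in kg, planet mass in kg)
    "Callisto": (1.883e6, 1.076e23, 1.898e27),
    "Moon": (3.844e5, 7.342e22, 5.972e24),
    "Triton": (3.548e5, 2.139e22, 1.024e26),
}

def hill_radius_km(distance_km, moon_mass_kg, planet_mass_kg):
    """Return the approximate Hill radius of the moon, in kilometres."""
    return distance_km * (moon_mass_kg / (3.0 * planet_mass_kg)) ** (1.0 / 3.0)

for name, params in MOONS.items():
    print(f"{name}: Hill radius ~ {hill_radius_km(*params):,.0f} km")
```

Callisto and our Moon both come out at tens of thousands of kilometres, and the Moon's Hill radius is larger than Triton's. Long-lived orbits are generally expected only well inside this radius, so it is an upper bound rather than a guarantee.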

It's not as much a question of "could," though, as it is a question of "what do we actually have." If I gave optimal orbital parameters for a hypothetical moon around one of these existing moons, I could make it work. But did nature make that? That's the question we still don't know the answer to.

Thanks for a great week, everyone, and see you back here for more next time!

That argument is still alive! The transactinides discovered so far are well "to the left of" (i.e. neutron poor) the island of stability. We have yet to figure out whether a high neutron value of the elements between about 110-130 would lend them more stability, because until someone comes up with some new idea, we don't know a good projectile-target reaction that will reach that island. But I have good confidence that eventually, someone will get there and give us some empirical estimate of just how stable that island is.

@Ethan

Well of course if your only way to find something out is by smashing things together you're not going to find new physics and learn how stuff works.

Imagine discovering how DNA is built by smashing strings of DNA against each other with brute force: you could have kept doing that forever without learning one single thing. It was X-ray crystallography that gave insight into the double helix, and its spiraling spring-shape.

In the same way Quarks could be DNA-like springs but by heavily smashing them into each other they keep on collecting meaningless bits and pieces. This method of doing science is idiotic.

@ Elle

"This method of doing science is idiotic."

what would you propose as an alternative? We can't photograph quarks. Don't get me wrong... am not saying one method is the only method. But it's the current lack of something better. If you can't show something better, then you're in the same idiot camp as the rest of us.

p.s.

You could apply that same attitude and say that the way we launch things in space is idiotic. It's based on chemical reaction, it's extremely inefficient, costs a ton of money, can take only a minuscule amount of total weight as cargo... etc etc..

But we don't use rockets because we think they are the best thing there ever will be... we use them because even after half a century, all of the earths engineers and scientists couldn't come up with anything better. Again, not saying to stop looking and settle for this forever. But we use the things we do, not for the lack of trying and looking for new things, but for the lack of finding better things.

And this basically applies to every field of science or physics... theoretical and experimental. It's easy to diss something in retrospect and requires no real effort, especially when flaws are discovered and known. Coming up with something better is hard.

@SL

Well there is the new XFEL project but I guess that's not refined enough, and more for molecules. On the other hand there was the recent article by Ethan to 'quietly' observe decay. What about fixating an atom and shake it and observe how it vibrates back more like an echocardiogram with waves, compare with cooled atoms … you know the more subtle approaches. Plenty of smart things to try.

@ Elle

XFEL is certainly a great project, but like you said it's for molecules. They are doing nanometer and picometer observations. At those scales, although QM effects do come into play occasionally, things are still "things".

Quarks on the other hand are waaaay waaay smaller than that. The Standard Model tells us that they don't actually have a size. So thinking about them in the sense of balls or anything with volume might be counterproductive. From the ZEUS/HERA collaboration:

"..the radius of the quark is smaller than 43 billion-billionths of a centimetre (0.43 x 10^-16 cm). That’s 2000 times smaller than a proton radius, which is about 60,000 times smaller than the radius of a hydrogen atom, which is about forty times smaller than the radius of a DNA double-helix.."

Note the beginning... "smaller than..."

And I don't really think doing anything with atoms (like cooling) is going to give deeper insight into something which is almost 100.000 times smaller. It's like doing an experiment on the whole planet in order to decipher something which is several football fields big/small.

But again, you ought to think about it in terms of fields and energy, not as 3D things. Same as electron.

@SL

So what, that's 1mm vs 1m if you consider the radius, and above all there are 3 of them interacting and shaking things around. You should be able to track a lot of the action and find motion patterns, something you'll never get by smashing things apart.

Elle,

Your supposed counterexample regarding DNA structure is not even a counterexample at all. We DID find out the structure of DNA by smashing things into the DNA molecule. Those things just weren't other DNA molecules, but rather X-ray photons. AFAIK, (and someone could provide a REAL counterexample if I'm wrong), ALL observations we have ever made have been done by smashing things into each other. Even going to a window and looking out upon the scenery outside is an observation based on smashing photons into the photoreceptors in your retina. Smashing things together seems to be just about the only thing we can do to find out about the universe. Fortunately, it's worked pretty well so far.

BTW, Elle, I think one problem that you fail to grasp is that probing to smaller and smaller distance scales inherently requires higher energies. Getting to small enough distance resolution to deal with the quark structures will inherently require energies that are sufficient to have a significant effect on the structure that you are attempting to study. Like I stated above, to make an observation, you must scatter some particle (or wave, at the quantum level, there really is not true distinction between the two) off the object being observed. As one example, suppose you plan to use photons to do the observation. You direct a photon at the structure and see how it interacts. The distance resolution is limited by the wavelength of the photon. You cannot probe distances smaller than the wavelength because of diffraction. So, then, use a smaller wavelength, you might say. That's certainly possible, but the energy of a photon is equal to hc/wavelength, so smaller wavelengths mean larger energies.

AFAIK, there's no escaping this limitation by using other particles. ALL particles have a wavelength (remember particles and waves at the quantum level are not really different). The wavelength of a particle is given by h/p, where p is the momentum. Rearranging gives p = h/wavelength, so the smaller the wavelength, the larger the momentum. But larger momentum also implies larger energy, so the energy increases in this case too.
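
To put rough numbers on the wavelength argument, here is a minimal sketch that evaluates E = hc/wavelength at a few length scales. The atom and proton sizes are illustrative round values chosen for the example, and the smallest scale is the quark size limit quoted earlier in this thread (0.43 x 10^-16 cm, i.e. 0.43e-18 m). For a highly relativistic massive particle E is approximately pc, so p = h/wavelength gives about the same energy.

```python
# Energy needed to resolve a given length scale, using E = h*c / wavelength.
# Length scales are illustrative round values, not precise measurements.

HC_EV_M = 1.239841984e-6  # Planck constant times speed of light, in eV * metres

def probe_energy_ev(wavelength_m):
    """Return the photon energy in eV for a wavelength given in metres."""
    return HC_EV_M / wavelength_m

SCALES_M = {
    "hydrogen atom (~0.5e-10 m)": 0.5e-10,
    "proton (~0.85e-15 m)": 0.85e-15,
    "quark size limit quoted in this thread (0.43e-18 m)": 0.43e-18,
}

for label, size_m in SCALES_M.items():
    print(f"{label}: ~{probe_energy_ev(size_m):.2e} eV")
```

The results run from tens of keV at atomic scales, to roughly a GeV at the proton scale, to a few TeV at the quoted quark limit, which is why probing smaller structure pushes toward collider energies.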

@Sean T

Exactly.

At some point you start to need something else, to learn something new.

Sure they crumbled DNA to figure out which nuclei were present, but only by using the smaller X-rays they were able to take a crucial step forward. There is a magnitude of difference between the DNA molecule and the X-rays that was the key.

If they hadn't had the X-rays they could have kept toying with DNA forever, just like what the LHC is doing now, a bit more energy here and there, but you are not making any significant progress with this method and never will.

@ Elle

I think your main problem is you think of a proton as being a rattle toy. It's not. Quarks, gluons etc.. are not solid objects. They are packets of energy. The measurement above (less than 0.43 x 10^-16 cm) is not a measurement of a quark's size.. it's setting an upper limit. Meaning, it's how far we can probe... and at that scale, we still don't find any "structure". Meaning.... it might be 10^-32 for all we know. Like I wrote earlier.. the Standard Model says they don't have any size at all. No volume.. thus nothing is shaking... it's energy bound by other energies.

@SL

This upper limit is for finding some inner-structure of the Quarks, while I was referring to taking a step back and looking more precisely at how things behave within a Proton by listening to its beats rather than smashing things apart.

We already know what everything in a Proton decays into, and from UHECRs we know that no significantly new parts will pop up. It is not like the LHC is boldly going where no proton has gone before.

Do you believe that if you keep on hitting the same nail every year a little harder that one day it will turn into gold?

Elle:

I think Sinisa's point in @4 is still relevant: I don't think there are any nonkinetic techniques for exciting subatomic particles to energies where we can "listen" to what they're made of. I'm sure if you or other people were to develop some, physicists would use them...heck, such a thing might win a Nobel prize, particularly if it was cheap and easy. But until someone comes up with such a technique, accelerators it is.

Like I said, I know of no observation that DOESN'T involve hitting one thing off of another. When trying to look at very small things, the nature of the universe is such that we must use a "smasher" that has high energy, generally high enough energy to affect the thing we're trying to observe.

Elle, you want something different. What? How are you going to measure what the components of the proton are doing if you don't hit those components with something else? Even should you manage to create some kind of resonance like you suggest, how are you going to detect it? You can't just put a proton under a microscope and look at what the quarks are doing, you know. (And even if you could, that is STILL smashing something, namely a photon, into the proton to observe its components).

You also seem to have missed the point of the DNA example. You claim you don't want to just keep smashing things. The point of the DNA example is precisely that smashing things WAS what they were doing. X-ray crystallography is merely a fancy-sounding term for smashing X-rays into molecules and seeing how they scatter. The X-ray photon is as much a "thing" being smashed into the DNA molecule as a proton smashing into another proton is. Your talk about using X-rays instead of smashing things is really pointless since smashing things is precisely what the X-ray experiments used to determine DNA structure were.

@Eric

I am not 100% sure, but I think that Sabine once wrote an article here saying that there are other ideas, but that all the attention and resources keep on going to the same old project.

It would be interesting to have an article with an overview of what experiments there are and what else could be tried.

@Sean T

You sound a bit hysterical to me, and you are missing the nuances. Of course we 'smash' one thing into another, but I was pointing at the differences in force and size between what's hitting what.

@ elle

"all the attention and resources keep on going to the same old project."

that, in the end, is much more down to politics than it is to science - sadly. At the end of the day, the checks are not written by scientists but by politicians, because the projects are state sponsored. I keep returning to the space program, 'cause I've studied its history more closely than that of HEP experiments. But the same thing happened there, e.g. once the shuttle's retirement had been announced and new objectives were given to NASA. A whole new host of vehicles and solutions was proposed by the science community, all within a reasonable budget and time-frame. And within a span of a couple of years... all were discarded. Not because of any technical reasons.. but because the powers that be decided that they wouldn't give money to it, and instead opted to re-cycle Apollo. So instead of something new.. you now have a launch vehicle that is more or less of similar size and performance as the Saturn V was 50 years ago (except for the looks)... and you have a new crew pod called Orion, which is pretty much the same thing as the old capsule was, except with modern computers.

What I'm trying to say is, when it comes to actually financing something... scientists and engineers are left in the second row. Even with CERN and LHC... there is a huge number of people who think/feel that it was/is a waste of time and money. So try selling an idea of something completely different to them. They would be like: "What?!... you don't like your old toy now? You want to build a new one and spend another billion?? Ha ha!!!"

At least that's what I think would happen.

Elle,

Okay, then, how do you overcome the limits posed by quantum theory? As I demonstrated above, probing to smaller and smaller distance scales requires greater energies. These greater energies will necessarily disrupt the system you are trying to observe. I don't see how you can overcome this limit; this is just how the universe works. Certainly, I am not saying that particle accelerators are necessarily the only way to do things, but I still cannot see how you can probe to quark dimensions with a low energy experiment. Low energy implies insufficient spatial resolution to observe the small distances you need to observe.
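As a rough illustration of the distance-versus-energy point above, here is a minimal sketch using the standard order-of-magnitude estimate E ~ ħc/d. The function name and the sample distances are assumptions chosen for illustration; they do not come from the discussion, and the estimate ignores factors of order unity.

```python
# Order-of-magnitude estimate of the energy needed to resolve a distance d,
# using E ~ hbar*c / d. This is a rough scaling argument, not a precise
# statement about any particular experiment.

HBAR_C_MEV_FM = 197.327  # hbar*c in MeV*fm (rounded CODATA value)
FM_PER_M = 1e15          # femtometres per metre


def probe_energy_gev(distance_m: float) -> float:
    """Approximate energy in GeV needed to resolve distance_m (in metres)."""
    distance_fm = distance_m * FM_PER_M
    return HBAR_C_MEV_FM / distance_fm / 1000.0  # MeV -> GeV


if __name__ == "__main__":
    # Hypothetical sample scales: an atom, a proton, and two smaller scales.
    for d in (1e-10, 1e-15, 1e-18, 1e-19):
        print(f"d = {d:.0e} m  ->  E ~ {probe_energy_gev(d):.3g} GeV")
```

Each factor of ten in spatial resolution costs a factor of ten in energy, which is why a low energy probe cannot resolve quark-scale distances under this estimate.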

@Sean T

I don't have a clear answer; perhaps focusing on cooled condensed matter, as mentioned earlier, might be a better option for discovering new physics. But high energy colliders are (almost) surely not a solution: if you want to keep on upgrading those you need to have a good theoretical reason, and there isn't one. You have alluded here to the need for higher energies, but those that could give us better insights are out of our reach. You could compare it to building rockets to fly to Andromeda: we aren't doing so because the goal isn't realistic, but still we want to build new colliders, because that's what we are used to. So we need to think about new theoretical models of where to look.

--

@SL

I agree, although you can't always blame (external) politics. Big organizations are complex; I guess NASA is linked to the military and to the academic community, and those are different worlds, which might cause internal tensions, but to be honest I don't know.

The same goes for CERN: so many different countries and universities are linked to this center, and so much money needs to be spent in equal amounts, etc. It has turned this project into a kind of oasis around which a whole community thrives; it needs to go on for plenty of reasons other than science as such.

But hey people watch tennis, the Olympics or whatever sport and spend huge budgets while it is year in year out almost exactly the same thing. So I guess science can have its Wimbledon, US Open and Roland-Garros all in one.

@ Elle

" it has turned this project into a kind of oasis around which a whole community thrives,"

exactly... and that's why it's great and that's what we should be focusing on. A hub of knowledge, different cultures, new science, exploration, collaboration etc etc...

What I wrote above is that even with such great ideals and notions... there is a large number of people in Europe who think the LHC is a waste. I am not in that group.. but I have heard the shouts well enough for years. And that's truly sad.

@SL

Luckily criticism is allowed, though I'm not sure about from within. Your comment also makes me think of the book 'The Road to Serfdom: With the Intellectuals and Socialism' by Friedrich A. Von Hayek on centralized planning.

I'm adding here a summary from a reader on Amazon:

"So the Road to Serfdom is analysis of this intense human desire to organize the world around us through planning in order to achieve some always ill-defined optimum for all. The book clearly demonstrates that the great flaw in this idea is that men can never get together and agree exactly what to plan for or what is optimal. The artist will want resources allocated to the National Endowment for the Arts; the scientist will insist that more be sent to the National Institutes for Health; the farmer will demand that subsidies for corn are the only way society can survive, parents and students will demand bursary, and the poor will clamor for support. This will inevitably lead to conflict as what each man lobbies for is not really an optimum for all but an optimum for himself. The only way these conflicts can be resolved is through a strong central authority that can coerce the cooperation of all the members of society and assign priorities for the allocation of resources. As men will always resist coercion, the applied authority must become increasingly violent to the point of being life threatening in order to impose its central economic will. As the process of organization and planning becomes ever more comprehensive, ultimate authority must eventually be concentrated in the hands of one person, a dictator. In Hayek’s words:

“Most planners who have seriously considered the practical aspects of their task have little doubt that a directed economy must be run on more or less dictatorial lines. That the complex system of interrelated activities, if it is to be consciously directed at all, must be directed by a single staff of experts, and that ultimate responsibility and power must rest in the hands of a commander-in-chief whose actions must not be fettered by democratic procedure .........[planners believe that] by giving up freedom in what are, or ought to be, the less important aspects of our lives, we shall obtain greater freedom in the pursuit of higher values.”"

"This will inevitably lead to conflict as what each man lobbies for is not really an optimum for all but an optimum for himself. "

true...

"The only way these conflicts can be resolved is through a strong central authority that can coerce the cooperation of all the members of society and assign priorities for the allocation of resources. As men will always resist coercion, the applied authority must become increasingly violent to the point of being life threatening in order to impose its central economic will."

I'm not sure that it is the only way, but yes.. that way leads to dictators...

" if it is to be consciously directed at all, must be directed by a single staff of experts, and that ultimate responsibility and power must rest in the hands of a commander-in-chief whose actions must not be fettered by democratic procedure ……"

I used to live in a society like that. In reality... as long as it's driven by a staff of "relatively good intentioned" experts... the system can work.. and can even thrive. Problems arise when they are substituted by self-serving corrupt thieves whose only "expertise" is politics itself. Then these systems fail miserably, in an even worse way than any liberal-capitalistic or democracy driven systems.

I honestly don't think there is a system where everyone is happy. And I have long given up pondering on what might be a good system... in a way.. my solution is to let nature take its course and what happens... happens. Because (at least IMO) it's the society in local terms that chooses how it will be run. Any political system or person can be over-turned no matter how oppressive or liberal it is. No single police or army force can "protect" one individual from a critical mass of common people. Thus, if we suffer under dictatorship, in a sense it's our own fault. Or if we live in a system where everyone has their opinion and that opinion is equal and nothing gets done... again.. it's our own fault.

But we have moved very far from accelerators and probing sub-atomic particles, so I'll stop here :)

Elle:

And that will probably continue to be the case until those 'other ideas' show promise at a small scale. Science is very much like most other human enterprises in that nobody and no ideas (or precious few people/ideas) get their start at the top. Nobody walks on the field with no football experience and becomes QB for the New England Patriots. Nobody gets hired to lead Apple without prior management experience. Race car drivers aren't going to use a car in a big race without watching it race first. Likewise, science funding organizations typically won't drop millions of dollars on an idea unless it showed some promise at a previous stage when someone dropped ten thousand or a hundred thousand on it. Large investments and successes are built on smaller investments and successes.

So, before someone drops $millions to build some non-kinetic replacement for CERN, they're going to have to see it work on the bench top level. And then a slightly bigger level. And probably work a bunch of times. So to go back to your point, it makes perfect sense for most of the attention and resources to go to things that are shown to work vs. high risk things that might not work. I would not expect to see lots of money going to such efforts...until they show they can work on a small scale. That's the way humans manage risk and investment not just in science, but in many many other fields too.

And yes, all the accelerators and other technologies currently in use for particle physics had to go through that success-before-success process too. The first cyclotron was 4 inches in diameter. When that worked, they built an 11-inch one that used higher voltages. When that worked, they continued to build progressively bigger and more sophisticated ones, until today we have circular colliders 27 km in circumference, synchrotrons, storage rings, and so on. None of them were built "ex nihilo"; scientists (and science funding governments) only chose to build them after they had been shown to be successful at smaller scales.

So, if there are new techniques being explored now, you should expect to see them first show up in academic papers on bench top experiments. Then, if they are successful, maybe a few years later somebody builds a room-sized version. Then if that also shows success, maybe a few years later a couple of universities get together and build a bigger one. And so on, and so on.

@Eric

But they also don't keep a QB on the field when his arm is broken, even when he did great things in the past, anybody would be better.

That's the problem with the LHC: there isn't any theoretical 'promise' anymore, and it's not like we don't know what happens beyond certain energies; we do, because of UHECRs. It is just that we don't have enough energy to probe deep enough …

Accelerator physics is far from a "broken arm." Thousands of basic research publications come out of it every year. You seem to think that CERN consists of one instrument that exists to do one thing. This is not so. There are IIRC nine different accelerators, and they run 24/7 doing tens or even hundreds of experiments per year.

But more importantly, no matter how broken you think it is, the bottom line is you have no replacement quarterback. Just like MM, or Kasim, or CFT, you have no viable alternative to offer. All complaints, no solutions.

@Eric

Sure, every now and then there is a paper published about a 'promising' bump like the one in 2015. There is nothing wrong with that: researchers collect the data, analyze them, and report on their findings, fine.

But the fact that there were over 500 papers written about it at the phase when it was clear to everyone that it can easily go away with more data is ridiculous.

'To put it in real-world perspective, imagine a land owner mentioning on a party that he might consider financing a bridge over one of his valleys, and group of unrelated architects jumping on that and preparing 50 different detailed bridge projects in stage when the owner isn't even decided if he wants it or not. Except that those architects would probably get fired from their companies for wasting their time.'

I have already mentioned a couple of times condensed matter research and the decay experiment that Ethan wrote about a few weeks ago.

BTW It is not because there is 'no alternative' that you should keep on doing a particular experiment. Imagine …

But here's the thing Elle... particle accelerators are not used only for probing new particles.

From CERN's publications..:

- over 30,000 accelerators in use world-wide

- 44% for radiotherapy (cancer treatment etc..)

- 41% for ion implantation (making better semiconductors)

- 9% for industrial application (curing materials etc..)

- 4% low energy research (mass spectroscopy, material analysis..)

- 1% medical isotope production (PET and SPECT imaging)

- <1% research

and I bet that things that are discovered in one field can have applications in other fields...

So while we might not learn anything new directly about individual quarks.. there are still ways to go with what particle accelerators can do for humanity. And as for quarks.. I'm certain there are still ways to go with quark-gluon plasma and experiments on the same.

Elle:

No, that's just science. Probably >95% of all the work published in every field will "go away" at some point due to (a) replacement by more accurate measurements, (b) revised underlying theory, (c) new technology/method that does the same thing better, or (d) change in societal priority to some different field. There is never any guarantee that some bit of research we do or fund will stand the test of time, and there is a very good chance that whatever bit you're considering won't. Because as the saying goes, if we knew the actual answer ahead of time, we wouldn't call it research.

As for your architects metaphor, you're completely off base. Companies do exactly that all the time - it's called business development. Big companies have entire sections that do nothing but such prospective work. When trying to win a government contract (which includes some R&D work), it's in fact a legal requirement. A good example of the way your metaphor really works is Trump's border wall - DHS recently put out a request for proposals for vendors who want to build it. Vendors who want that business must then write up detailed architectural plans for what they intend to build, how much it will cost, what resources they'll use, how many people it will employ, etc., etc., etc., all on their own dime. The government legally cannot and will not reimburse them for the money spent preparing such plans. The government then considers all those proposals and picks some, one, or none to fund. The bids for that wall work probably employed far more than a mere 50 architects and probably produced far more than 50 different wall plans. So you see Elle, your '50 architects' counter-argument is no counter-argument at all.

@SL

Again, there are no new particles to be found so that kind of research has become obsolete, there is no probing for new particles.

--

@Eric

I bring up a bridge, you want to build the wall. I guess it fits perfectly with your drive for conservatism. Let's keep on building bigger machines 'cause that's what we do, it will be great, it's gonna be huge! Because that's how things worked in the past.